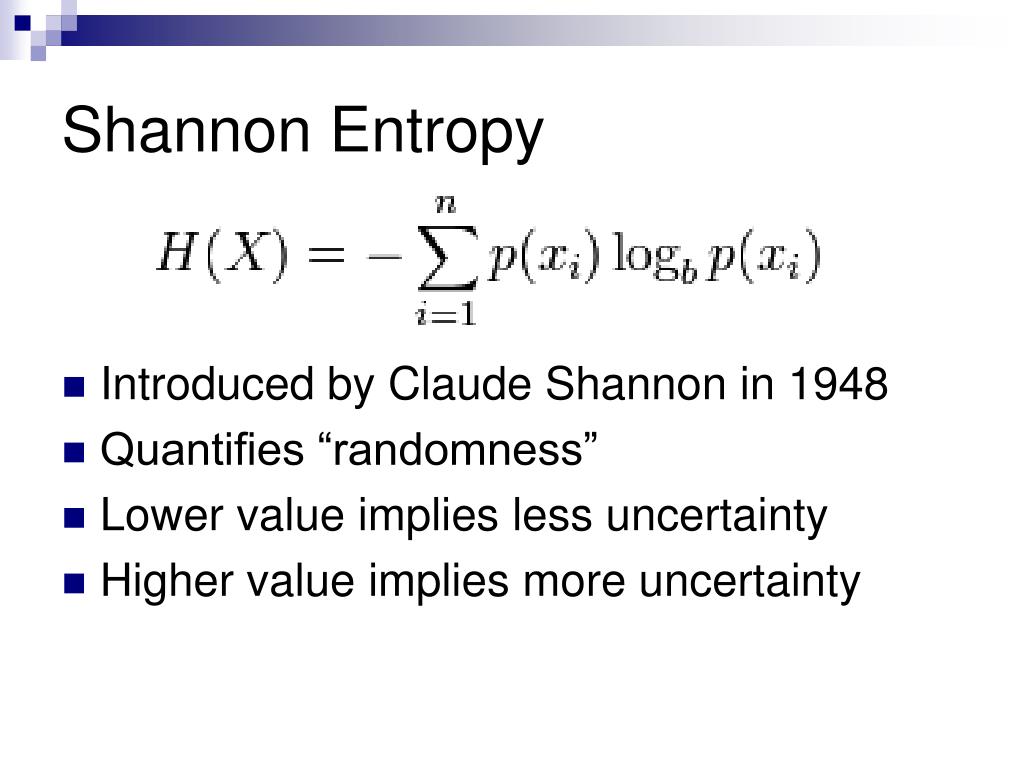

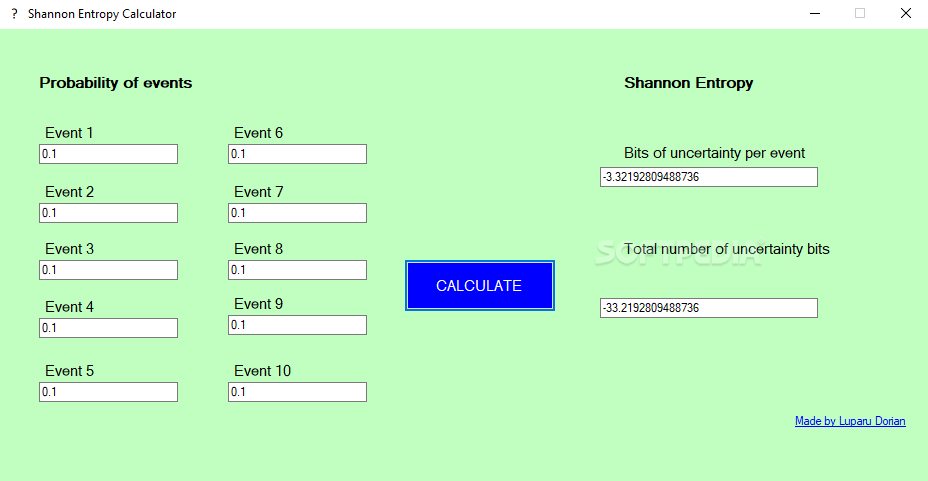

Shannon’s discovery of the fundamental laws of data compression and transmission marks the birth of information theory. Shannon entropy was first introduced by Claude Shannon in his landmark paper "A Mathematical Theory of Communication", published in July and October of 1948, which is the Magna Carta of the information age. Shannon’s concept of entropy can now be taken up.

At a conceptual level, Shannon entropy is simply the "amount of information" in a variable. More mundanely, that translates to the amount of storage (e.g. number of bits) required to store the variable, which can intuitively be understood to correspond to the amount of information in that variable. The Shannon entropy equation provides a way to estimate the average minimum number of bits needed to encode a string of symbols, based on the frequency of the symbols.

One way to build intuition is to count the number of rearrangements of the balls in each bucket. This number of arrangements won’t be part of the formula for entropy, but it gives some intuition for the case where there are many arrangements.

The entropy function estimates the Shannon entropy H of the random variable Y from the corresponding observed counts y, and the freqs function estimates bin frequencies from the counts y.

Applying a Shannon-entropy-based aromaticity index to some non-benzenoids and to linear and angular polyacenes also gives satisfactory results, and the index proves quite suitable for determining the local aromaticity of different rings in polyaromatic hydrocarbons. The values of the index are also evaluated for some substituted penta- and heptafulvenes, where they successfully predict the order of aromaticity in these compounds.
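To make the step from observed counts to an entropy value concrete, here is a minimal Python sketch. It assumes the simplest possible approach, the plug-in (empirical frequency) estimate, and the function names `freqs_from_counts`, `shannon_entropy` and `string_entropy` are hypothetical names chosen for illustration; they are not the entropy and freqs functions mentioned above, which may use other estimators.

```python
import math
from collections import Counter


def freqs_from_counts(counts):
    """Empirical bin frequencies: each count divided by the total count."""
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must sum to a positive number")
    return [c / total for c in counts]


def shannon_entropy(counts, base=2.0):
    """Plug-in estimate of H = -sum(p * log(p)) from observed counts.

    Empty bins (p == 0) are skipped, following the convention 0 * log(0) = 0.
    With base=2 the result is in bits.
    """
    probs = freqs_from_counts(counts)
    return -sum(p * math.log(p) / math.log(base) for p in probs if p > 0)


def string_entropy(text, base=2.0):
    """Entropy per symbol of a string, based on its symbol frequencies."""
    return shannon_entropy(list(Counter(text).values()), base)


if __name__ == "__main__":
    # Two equally likely outcomes: about 1 bit per symbol.
    print(shannon_entropy([1, 1]))
    # Four equally likely outcomes: about 2 bits per symbol.
    print(shannon_entropy([5, 5, 5, 5]))
    # Symbol frequencies of a short string.
    print(string_entropy("aabb"))
```

The plug-in estimate is known to be biased for small samples, so this sketch is best read as an illustration of the formula rather than a recommended estimator.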

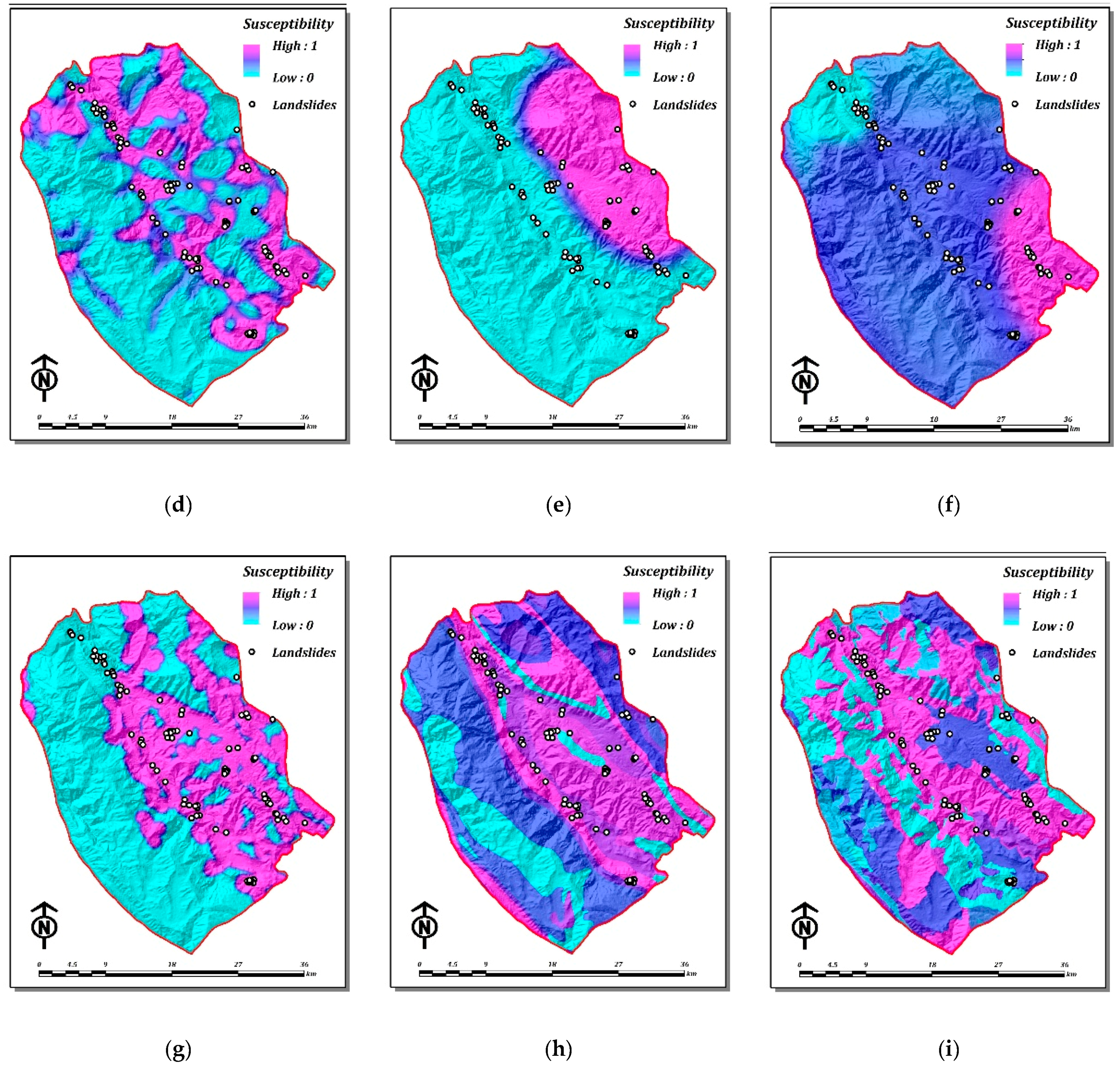

Using the B3LYP method and different basis sets (6-31G**, 6-31+G** and 6-311++G**), the Shannon aromaticity (SA) values of some five-membered heterocycles, C4H4X, are calculated. Significant linear correlations are observed between the evaluated SAs and other criteria of aromaticity such as the ASE, Λ and NICS indices. According to the obtained relationships, the range 0.003 < SA < 0.005 is chosen as the boundary between aromaticity and antiaromaticity. At the B3LYP/6-31+G** level of theory, the Shannon aromaticities of a series of mono-substituted benzene derivatives are calculated and analyzed. Compared with the other indices, the new index shows the smallest standard deviation between the aromaticities and the best linear correlation with the Hammett substituent constants.

Shannon entropy has also been tracked alongside seismic energy; Figure 7c shows both for a period beginning on 29 April. Sharp drops, with the values approaching zero, occurred at the same time as (but not before) the caldera collapse and earthquake. As such, Shannon entropy was not a precursory feature. Then, during the eruptive episode, Shannon entropy remained very low.

Whereas the Shannon Entropy Diversity Metric measures diversity in terms of the distribution of individual foods, MFAD measures it in terms of nutrients.

Based on the local Shannon entropy concept in information theory, a new measure of aromaticity is introduced. This index, which describes the probability of electronic charge distribution between atoms in a given ring, is called Shannon aromaticity (SA).

In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication", and is also referred to as Shannon entropy. For outcomes with probabilities $p_1, \dotsc, p_n$, it is defined as $H = -\sum_{i=1}^{n} p_i \log p_i$.

Shannon entropy is a quantity satisfying a set of relations. In short, the logarithm is there to make it grow linearly with system size and "behave like information". The first means that the entropy of tossing a coin $n$ times is $n$ times the entropy of tossing a coin once.
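The coin-toss statement can be checked directly from the definition. As a worked example, assume a fair coin and base-2 logarithms (both are assumptions made here for concreteness). A single toss has two outcomes with probability $\tfrac{1}{2}$ each, and $n$ independent tosses have $2^n$ equally likely outcomes, so:

$$
H_1 = -\sum_{i=1}^{2} \frac{1}{2} \log_2 \frac{1}{2} = 1,
\qquad
H_n = -\sum_{i=1}^{2^n} \frac{1}{2^n} \log_2 \frac{1}{2^n} = \log_2 2^n = n = n \, H_1 .
$$

Without the logarithm, a measure such as the raw number of outcomes would grow as $2^n$ rather than linearly in $n$.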
